Why Automation Projects Stall After Pilot Phase

- Feb 20

The strategic failure that defines enterprise automation is not technical — it is structural. Over 80% of AI and automation projects fail to reach production, according to RAND Corporation's 2024 research — twice the failure rate of traditional IT initiatives. This disparity is not explained by insufficient technology maturity or inadequate vendor capabilities. The technology works. The pilot demonstrates value. The business case is validated. And yet, at the moment of organizational commitment required to move from proof of concept to scaled deployment, the majority of initiatives collapse under the weight of factors that were never addressed during the pilot phase itself: governance misalignment, stakeholder turnover, resource reallocation friction, and the systemic organizational resistance that emerges when a process change threatens established power structures.

The economic cost of this organizational failure mode is measurable and accelerating. Gartner predicts that at least 30% of generative AI projects will be abandoned after proof of concept by the end of 2025, driven by poor data quality, inadequate risk controls, escalating costs, or unclear business value. These are factors that should have been resolved before pilot initiation, not discovered during scale-up attempts. More broadly, 70% of digital transformation initiatives fail to meet their objectives, costing organizations an estimated $2.3 trillion annually in wasted investment. The pattern is consistent across industries, geographies, and automation categories: organizations excel at validating that automation is possible but systematically fail at the organizational redesign required to make it permanent.

The thesis this analysis advances is organizational: Intelligent Process Automation success is not determined by the quality of the pilot — it is determined by the quality of the organizational architecture into which the pilot must integrate. The companies that successfully scale automation beyond proof of concept are not those with the most sophisticated technology deployments. They are those that have resolved governance ambiguity, aligned stakeholder incentives, secured executive sponsorship that survives personnel turnover, and embedded change management discipline into the automation roadmap before the first line of code is written. The sections that follow deconstruct the structural failure modes that stall automation after pilot, the organizational preconditions required to prevent those failures, and the operational framework through which automation transitions from validated capability to scaled institutional asset.

01 —

The Organizational Readiness Gap — Why Technical Validation Is Necessary but Not Sufficient

The fundamental error in automation program design is the assumption that organizational readiness follows automatically from technical validation. A successful pilot demonstrates that a process can be automated. It does not demonstrate that the organization is prepared to automate it at scale — and the gap between these two states is where the majority of automation investments are lost. Technical readiness addresses whether the automation platform can execute the target process reliably. Organizational readiness addresses whether the institution possesses the governance clarity, stakeholder alignment, data infrastructure, and change capacity required to integrate that automated process into ongoing operations. The former can be validated in weeks. The latter requires months of deliberate organizational architecture work that most enterprises treat as an afterthought rather than a precondition.

Nearly two-thirds of organizations remain stuck in "pilot mode," unable to scale automation projects across the enterprise, according to McKinsey's comprehensive automation research — with BCG finding that 74% have yet to show tangible value from their AI and automation efforts.

The organizational readiness assessment that should precede any pilot-to-production decision must evaluate six non-negotiable dimensions. First, executive sponsorship durability: is the champion who approved the pilot still in role, still committed, and still empowered to allocate resources for scale-up? Second, governance alignment: have the cross-functional stakeholders whose cooperation is required for scaled deployment formally committed to process redesign, or are they passively observing the pilot with no obligation to support its expansion? Third, resource commitment: has the organization secured budget, headcount, and technical infrastructure for the multi-year journey from pilot to maturity, or is funding contingent on quarterly budget cycles that can be redirected at any time? Fourth, data quality and availability: does the production data environment match the clean, curated data set used during the pilot, or will the automation encounter data issues at scale that were never surfaced during proof of concept? Fifth, change management capacity: does the organization possess the training infrastructure, communication discipline, and stakeholder engagement muscle required to transition hundreds or thousands of employees from manual to automated processes? Sixth, risk tolerance: is leadership prepared to accept the operational disruption, initial performance degradation, and cultural resistance that accompany any significant process transformation, or will the first sign of friction trigger a retreat to legacy processes?
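One way to make the six-dimension assessment operational is to record a score per dimension and block the pilot-to-production decision when any single dimension falls short. The sketch below is illustrative only: the 1-5 scale, the threshold of 3, and all identifiers are assumptions, not part of any published framework.

```python
from dataclasses import dataclass

# The six dimensions named in the text; identifiers are illustrative.
DIMENSIONS = (
    "executive_sponsorship",
    "governance_alignment",
    "resource_commitment",
    "data_quality",
    "change_management_capacity",
    "risk_tolerance",
)


@dataclass(frozen=True)
class ReadinessResult:
    ready: bool
    gaps: tuple  # dimensions that scored below the threshold


def assess_readiness(scores: dict, threshold: int = 3) -> ReadinessResult:
    """Treat every dimension as non-negotiable: one weak score blocks scale-up.

    `scores` maps each dimension to a 1-5 rating (a hypothetical scale).
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"unscored dimensions: {missing}")
    gaps = tuple(d for d in DIMENSIONS if scores[d] < threshold)
    return ReadinessResult(ready=not gaps, gaps=gaps)


if __name__ == "__main__":
    example = {d: 4 for d in DIMENSIONS}
    example["data_quality"] = 2  # pilot data was curated; production data is not
    result = assess_readiness(example)
    print(result.ready, result.gaps)
```

The scores are deliberately not averaged. A strong sponsor does not compensate for unusable production data, so a single gap is enough to hold the decision.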

Organizations that conduct this readiness assessment before pilot initiation design their proof of concept to address organizational barriers, not merely technical ones. Those that conduct it only after pilot success discover that the organizational architecture required for scale does not exist, and that building it retroactively, under the political pressure of an already-validated business case, is far more difficult than establishing it as a precondition. The strategic implication is decisive: Intelligent Process Automation must be treated as an organizational transformation initiative with a technical component, not a technical initiative with an organizational afterthought.

02 —

The Stakeholder Turnover Problem — When Institutional Knowledge Walks Out the Door

The organizational pathology that most reliably destroys automation scale-up is executive and stakeholder turnover. A pilot is typically championed by a specific individual: a director, VP, or C-level executive who understands the process being automated, believes in the strategic value of transformation, and possesses the political capital to secure pilot funding and cross-functional cooperation. When that individual leaves the organization, is promoted to a different function, or is reassigned to a higher-priority initiative, the institutional knowledge, political support, and resource commitment they represented evaporate. The replacement executive inherits a validated pilot but not the context, conviction, or incentive structure that drove its creation. The result is predictable: the automation initiative stalls, not because it failed technically, but because it lost its organizational champion.

The data on organizational turnover's impact on technology initiatives is unambiguous. Research on digital transformation failures identifies stakeholder turnover as a primary cause of project stagnation, with knowledge loss and continuity disruption creating cascading delays that compound over time. In automation contexts specifically, the turnover problem is particularly acute because automation pilots are often multi-month initiatives that span performance review cycles, reorganizations, and strategic priority shifts. A pilot initiated in Q1 under one executive sponsor may reach readiness for scale-up in Q4 under an entirely different leadership structure with different strategic priorities and different tolerance for operational risk.

The operating model for mitigating stakeholder turnover risk requires three structural interventions. First, institutionalize the automation business case at the executive committee or board level, ensuring that the strategic rationale and resource commitment are documented in governance materials that survive individual personnel changes. Second, establish a cross-functional automation steering committee with representation from all impacted functions, distributing ownership across a group rather than concentrating it in a single champion who can leave. Third, build explicit knowledge transfer protocols into the automation program plan, requiring documented decision logs, stakeholder analysis, and implementation playbooks that enable continuity when turnover occurs. Organizations that implement these safeguards treat automation as an institutional asset from pilot initiation. Those that do not discover that their validated pilot becomes an orphaned project the moment its champion exits, and that rebuilding organizational commitment after that exit is far harder than preventing the dependency in the first place.

03 —

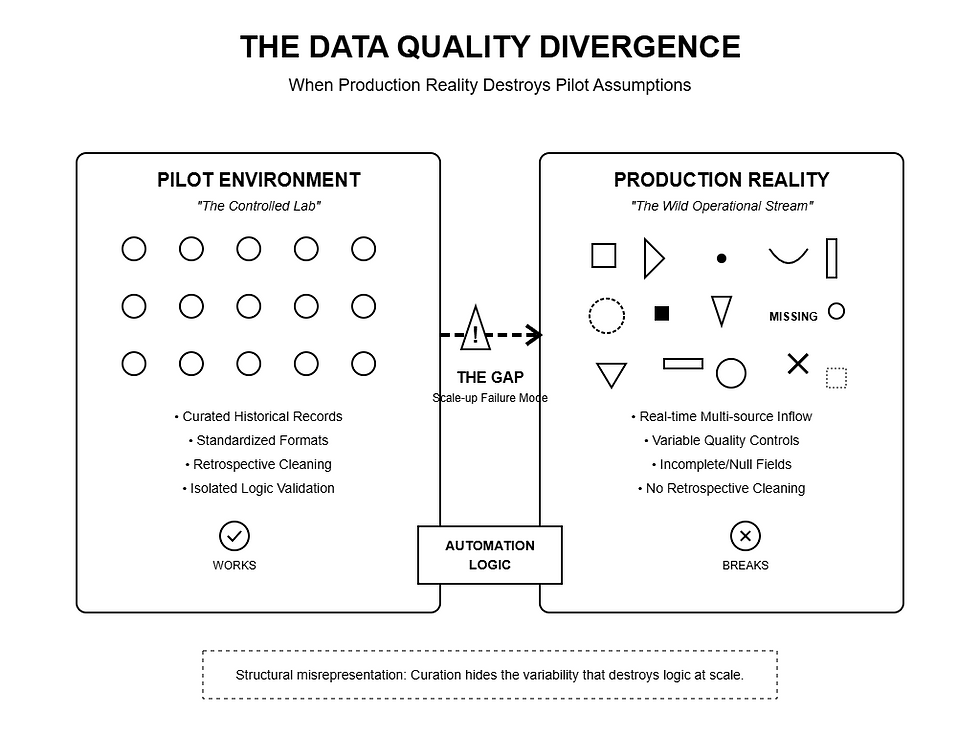

The Data Quality Divergence — When Production Reality Destroys Pilot Assumptions

The technical failure mode that most reliably emerges during automation scale-up is the data quality gap between pilot and production environments. Pilots are typically executed on curated data sets — historical records that have been cleaned, standardized, and validated to eliminate the variability, incompleteness, and inconsistency that characterize real-world operational data. This curation is necessary to isolate the automation logic and validate that the technology can execute the target process. But it creates a structural misrepresentation of the data environment the automation will encounter in production, where data arrives in real time, from multiple source systems, with varying quality controls and no opportunity for retrospective cleaning.

The empirical evidence on data quality as the primary cause of automation failure is overwhelming. Gartner research confirms that 85% of AI projects fail due to poor data quality, with inadequate data availability and quality issues creating cascading failures during scale-up that were never surfaced during pilot validation. The specific failure pattern is consistent: the pilot executes flawlessly on historical data, stakeholders approve scale-up based on that performance, production deployment begins, and the automation immediately encounters data issues — missing fields, inconsistent formats, duplicate records, referential integrity violations — that degrade performance to levels below manual processing. The organization responds by pausing deployment, initiating a data remediation project, and discovering that achieving production-grade data quality requires months or years of data governance investment that should have been completed before the pilot was initiated.

Through 2026, organizations will abandon 60% of AI projects that are not supported by AI-ready data, according to Gartner's 2025 forecast, underscoring that data readiness is not merely a technical prerequisite but the foundational determinant of whether automation can transition from pilot to production.

The operational model for preventing the data quality divergence requires that organizations conduct production data profiling before pilot initiation, not after pilot validation. This profiling assesses the completeness, consistency, accuracy, and timeliness of the actual data that the automation will process at scale — and surfaces the data quality issues that must be resolved before production deployment is viable. If profiling reveals that 30% of invoice records are missing vendor identifiers, or that customer records contain inconsistent address formats across regional systems, or that transaction timestamps are unreliable due to legacy system integration issues, those deficiencies must be remediated during the pilot phase, with the pilot designed explicitly to test the automation's resilience to real-world data variability. Organizations that follow this discipline treat the pilot as a joint validation of both the automation logic and the data infrastructure. Those that skip it discover during scale-up that their pilot validated a capability that cannot operate in the environment where it must actually be deployed — a failure that is organizational, not technical, and that reflects inadequate preparation rather than inadequate technology.
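The profiling step described above can be sketched with nothing more than the standard library. This minimal example measures completeness (the share of records missing a required field) and duplicate keys on a handful of invented invoice records. The field names, the sample data, and the 5% and 1% thresholds are all assumptions chosen for illustration. A real profile would also cover the accuracy and timeliness checks the text mentions.

```python
from collections import Counter


def profile_records(records, required_fields, key_field):
    """Return the missing-value rate per required field and the duplicate-key rate."""
    total = len(records)
    if total == 0:
        raise ValueError("no records to profile")
    missing = Counter()
    for rec in records:
        for name in required_fields:
            value = rec.get(name)
            # Treat absent, None, and blank values as missing.
            if value is None or str(value).strip() == "":
                missing[name] += 1
    keys = Counter(rec.get(key_field) for rec in records)
    duplicates = sum(count - 1 for count in keys.values() if count > 1)
    return {
        "missing_rate": {name: missing[name] / total for name in required_fields},
        "duplicate_rate": duplicates / total,
    }


def blocking_issues(profile, max_missing=0.05, max_duplicates=0.01):
    """List the findings that exceed the (hypothetical) tolerances."""
    issues = [
        f"{name}: {rate:.0%} missing"
        for name, rate in profile["missing_rate"].items()
        if rate > max_missing
    ]
    if profile["duplicate_rate"] > max_duplicates:
        issues.append(f"duplicate keys: {profile['duplicate_rate']:.0%}")
    return issues


if __name__ == "__main__":
    # Invented sample of production-like invoice records.
    invoices = [
        {"invoice_id": "A1", "vendor_id": "V9", "amount": 120.0},
        {"invoice_id": "A2", "vendor_id": "", "amount": 80.0},
        {"invoice_id": "A2", "vendor_id": "V3", "amount": 80.0},
        {"invoice_id": "A4", "vendor_id": None, "amount": 15.5},
    ]
    profile = profile_records(invoices, ["invoice_id", "vendor_id"], "invoice_id")
    print(blocking_issues(profile))
    # ['vendor_id: 50% missing', 'duplicate keys: 25%']
```

Running a check like this against a production extract before the pilot is scoped turns "data quality" from an abstract risk into a list of specific remediation items.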

04 —

The Change Management Deficit — When Organizations Deploy Automation Without Preparing People

The organizational resistance that emerges during automation scale-up is rarely irrational. It is the predictable consequence of deploying process change without investing in the stakeholder preparation, training infrastructure, and communication discipline required to enable adoption. Employees whose processes are being automated experience that change as a direct threat: to their job security, their professional identity, their perceived competence, and their organizational relevance. When automation is introduced without addressing those concerns explicitly, through transparent communication about workforce transition plans, retraining opportunities, and the value proposition of automation for individual contributors and not merely for the enterprise, the resulting resistance is not a failing on the part of employees. It is a rational response to institutional indifference.

The data on change management as the determinant of automation success is unambiguous. McKinsey research confirms that organizations investing in cultural change alongside technology deployment see success rates 5.3 times higher than those focused exclusively on technology — a variance that reflects the reality that automation adoption is not a technical deployment challenge but a human systems integration challenge. BCG's analysis of AI and automation scaling identifies the "10-20-70 principle": automation success is 10% algorithms, 20% data and technology, and 70% people, processes, and cultural transformation. Organizations that win fundamentally redesign workflows and invest in stakeholder preparation. Those that fail attempt to automate legacy processes without addressing the organizational context in which those processes are embedded.

The change management architecture required to enable automation scale-up must be designed into the program from inception, not added reactively when resistance emerges. This architecture includes five core components. First, transparent communication about the strategic rationale for automation, the expected workforce impact, and the organization's commitment to redeployment and retraining rather than elimination. Second, comprehensive skills assessment and development programs that prepare employees for the higher-value work they will perform once routine tasks are automated. Third, phased deployment that enables iterative learning and adjustment rather than enterprise-wide rollout that overwhelms the organization's absorption capacity. Fourth, formal feedback mechanisms that surface adoption barriers in real time and enable leadership to address concerns before they metastasize into organized resistance. Fifth, visible executive sponsorship that signals automation as a strategic priority worthy of sustained investment, not a cost-cutting initiative that will be reversed at the first sign of difficulty. Organizations that implement this architecture treat Intelligent Process Automation as a workforce transformation initiative requiring deliberate human capital investment. Those that omit it discover that their technically sound automation fails for reasons that have nothing to do with the technology itself.

05 —

The Governance Ambiguity Problem — When Accountability Disappears at Scale

The structural failure that distinguishes successful automation scale-up from stalled initiatives is governance clarity. A pilot operates within a controlled environment with clear ownership, dedicated resources, and explicit success criteria. Production deployment requires integration into the organization's permanent operating model — which means establishing who owns the automation platform, who is accountable for its performance, who approves process changes, who resolves exceptions, and who funds ongoing maintenance and enhancement. When these governance questions are deferred rather than resolved, automation stalls not because it does not work but because no one has clear authority to make it permanent.

The governance ambiguity that kills automation scale-up manifests in three patterns. First, ownership ambiguity: IT views the automation platform as a business process tool that business functions should own, while business functions view it as a technology asset that IT should manage. The result is that no function takes ownership, and the platform becomes an orphaned capability that degrades over time as technology dependencies evolve and business requirements change. Second, funding ambiguity: the pilot was funded through innovation budgets or discretionary allocations that do not carry forward into the permanent operating budget. When scale-up requires sustained investment in infrastructure, support, and enhancement, no budget owner accepts accountability for those costs, and the automation initiative starves for resources despite validated ROI. Third, performance accountability ambiguity: when automated processes generate exceptions or errors, it is unclear whether those issues should be resolved by process owners, platform administrators, or the automation vendor — and the resulting finger-pointing delays resolution and erodes stakeholder confidence in the automation's reliability.

The governance framework required to prevent this ambiguity must be established before pilot-to-production transition begins. This framework must explicitly designate a permanent platform owner with budget authority and performance accountability, establish a cross-functional steering committee with authority to approve process changes and resolve exceptions, define escalation paths for technical and business issues, and document the ongoing funding model that ensures the automation receives the resources required to sustain performance as business volumes and requirements evolve. Organizations that implement this governance architecture treat automation as a permanent institutional capability requiring formal operating procedures. Those that defer governance questions until after deployment discover that the absence of clear accountability is sufficient to prevent adoption regardless of how well the technology performs, and that establishing governance retroactively, after stakeholders have already formed assumptions about who is responsible for what, is considerably harder than codifying it before those assumptions calcify.

06 —

The Phased Deployment Discipline — Why Enterprise-Wide Rollout Is Organizational Malpractice

The deployment strategy that most reliably converts a successful pilot into a failed scale-up is enterprise-wide rollout. The logic behind this approach is superficially appealing: the pilot validated the automation, the business case is approved, and deploying everywhere simultaneously maximizes speed to value. The reality is that enterprise-wide deployment exposes the entire organization to automation risk simultaneously, eliminates the opportunity to learn from early deployment issues before they propagate, and overwhelms the organization's capacity to absorb change. The result is predictable: performance issues that could have been resolved through iterative refinement instead trigger enterprise-wide disruption, stakeholder confidence collapses, and leadership halts deployment — leaving the organization with a partially automated environment that is more dysfunctional than the manual baseline it replaced.

The phased deployment model that prevents this failure operates through deliberate geographic, functional, or process segmentation. Rather than deploying automation to all locations, departments, or transaction types simultaneously, the organization selects a limited scope for initial production deployment — a single region, a single business unit, a single customer segment — and treats that deployment as a learning environment where issues can be identified, resolved, and incorporated into the deployment playbook before expansion. This phased approach reduces risk, enables iterative refinement, and builds organizational confidence through visible early wins that demonstrate the automation's viability at scale. Leading transformation frameworks now insist on a disciplined sequence: define the problem, standardize processes, establish governance, then deploy technology — with deployment itself following a "start small and scale" strategy that minimizes disruption and maximizes learning.

The operational discipline required for effective phased deployment includes three core practices. First, explicit phase gates that prevent progression to the next deployment phase until success criteria from the current phase are met, ensuring that issues are resolved before they compound. Second, structured learning capture that documents what was discovered during each phase and how the deployment approach was adjusted in response, creating an institutional knowledge base that accelerates subsequent phases. Third, stakeholder engagement that maintains transparency about deployment progress, issues encountered, and remediation actions taken, building confidence that leadership is managing the transformation responsibly rather than forcing automation regardless of operational impact. Organizations that follow this discipline treat automation scale-up as a multi-year journey requiring sustained executive commitment and operational rigor. Those that rush to enterprise-wide deployment discover that their haste to capture value creates organizational trauma that makes subsequent automation initiatives much harder to approve, regardless of their technical merit.
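The phase-gate practice can be expressed as a small data structure: each phase carries its own success criteria and a log of lessons, and the rollout cannot move past the first phase whose criteria are not all met. The following is a minimal sketch under those assumptions. The phase names, criteria, and the `active_phase` helper are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Phase:
    name: str
    criteria: dict  # criterion name -> True once the criterion is met
    lessons: list = field(default_factory=list)  # structured learning capture

    def gate_passed(self) -> bool:
        # A phase with no criteria never passes: gates must be explicit.
        return bool(self.criteria) and all(self.criteria.values())


def active_phase(phases):
    """Return the first phase whose gate is still closed, or None if all passed."""
    for phase in phases:
        if not phase.gate_passed():
            return phase
    return None


if __name__ == "__main__":
    rollout = [
        Phase("Region A", {"exception_rate_within_target": True, "support_trained": True}),
        Phase("Region B", {"exception_rate_within_target": False, "support_trained": True}),
        Phase("Enterprise", {"exception_rate_within_target": False}),
    ]
    rollout[0].lessons.append("Vendor IDs needed cleanup before go-live")
    print(active_phase(rollout).name)  # Region B
```

Keeping the lessons on the phase object, next to the criteria, reflects the second practice: what was learned in one phase travels with the playbook into the next.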

Strategic Imperatives - The Organization Is the Automation Infrastructure

The automation initiatives that will succeed in the next five years are not those with the most sophisticated technology. They are those embedded in organizations that have resolved governance ambiguity, secured executive sponsorship that survives personnel turnover, established data quality as a precondition rather than an afterthought, and built change management discipline into their transformation roadmap from inception. The evidence is structural and compounding: over 80% of AI and automation projects fail to reach production, nearly two-thirds of organizations remain stuck in pilot mode unable to scale, and Gartner predicts that some 30% of generative AI projects will be abandoned after proof of concept. This is not a technology maturity problem. It is an organizational architecture problem, and it requires structural intervention at the level of how automation programs are governed, resourced, and integrated into permanent operations.

The strategic imperative this creates is both urgent and specific. Every organization with validated automation pilots that have not scaled must ask a disqualifying question: if the technical capability exists and the business case is validated, what organizational barriers are preventing deployment — and why were those barriers not addressed before pilot initiation? For most, the honest answer reveals that the pilot was designed to validate technology feasibility, not organizational readiness, and that the governance, stakeholder alignment, data quality, and change management infrastructure required for production deployment was never established. This gap is not a project management oversight. It is a strategic design failure that reflects treating automation as a technical initiative rather than an organizational transformation requiring deliberate institutional preparation.

The operating model for resolving that gap is clear and executable. It begins with organizational readiness assessment before pilot approval, ensuring that executive sponsorship, stakeholder alignment, governance clarity, data quality, and change management capacity are established as preconditions for pilot funding. It continues with formal knowledge transfer protocols and distributed ownership structures that protect the initiative from stakeholder turnover. It proceeds through phased deployment that enables iterative learning and adjustment rather than enterprise-wide rollout that overwhelms organizational absorption capacity. And it culminates in permanent governance frameworks that embed automation into the organization's operating model, ensuring that platforms receive the resources, accountability, and executive attention required to sustain performance as business requirements evolve over time.

The market does not reward organizations that validate automation capabilities but fail to deploy them at scale. It rewards those that treat automation as an organizational asset requiring deliberate institutional architecture — governed, resourced, and managed with the same rigor applied to any permanent business capability. The organizations that act on this principle today will convert their validated pilots into scaled competitive advantages. Those that continue treating automation as a technology experiment will continue generating proof-of-concept successes that deliver zero institutional value.